Expose secure AI endpoints powered by your own hardware

Turn your Linux and macOS hardware into an OpenAI- and Anthropic-compatible AI platform. Route traffic across your own compute, compose MCP tool stacks, and stop paying big vendors for compute when you already own the hardware.

Join Early Access

How it works

Bring your own hardware, deliver cloud-grade AI APIs

A practical rollout path from private model hosts to secure, compatible, and observable inference endpoints.

Step 1

Connect your hardware

Install the Infersec conduit on Linux or macOS hosts connected to your model runtimes.

Step 2

Compose routing rules

Define routing by latency, source health, fallback order, and endpoint-level policy.
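
As a rough illustration of what such a rule evaluates, here is a minimal Python sketch. The `Source` fields, the `pick_source` helper, and the latency threshold are all hypothetical names chosen for this example and are not taken from the Infersec configuration format.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Source:
    """A hypothetical inference source; field names are illustrative only."""
    name: str
    priority: int          # lower number = earlier in the fallback order
    latency_ms: float      # most recent observed latency
    healthy: bool = True   # result of the last health check


def pick_source(sources: list[Source], max_latency_ms: float = 2000.0) -> Optional[Source]:
    """Return the best healthy source, or None if every source is unavailable."""
    candidates = [s for s in sources if s.healthy and s.latency_ms <= max_latency_ms]
    if not candidates:
        return None
    # Fallback order first, then lowest latency among equal priorities.
    return min(candidates, key=lambda s: (s.priority, s.latency_ms))


fleet = [
    Source("mac-studio", priority=0, latency_ms=120.0, healthy=False),
    Source("linux-gpu-1", priority=0, latency_ms=180.0),
    Source("linux-gpu-2", priority=1, latency_ms=90.0),
]
chosen = pick_source(fleet)
print(chosen.name if chosen else "no source available")  # prints "linux-gpu-1"
```

In this sketch the unhealthy host is skipped, and the faster `linux-gpu-2` still loses to `linux-gpu-1` because fallback order takes precedence over latency. A real policy could weigh these differently.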

Step 3

Publish secure endpoints

Expose OpenAI- and Anthropic-compatible endpoints so existing SDKs work immediately.
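
To make the compatibility claim concrete, the sketch below builds an OpenAI Chat Completions style request using only the Python standard library. The base URL, API key, model name, and the `/chat/completions` path on your endpoint are placeholders and assumptions, not documented Infersec values.

```python
import json
import urllib.request

# Hypothetical values: replace with your own endpoint URL, key, and model name.
BASE_URL = "https://llm.example.invalid/v1"
API_KEY = "sk-placeholder"


def build_chat_request(prompt: str, model: str = "my-local-model") -> urllib.request.Request:
    """Build (but do not send) an OpenAI Chat Completions style request."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {API_KEY}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_chat_request("Say hello in one sentence.")
print(request.get_method(), request.full_url)
# Sending is left out on purpose; with a real endpoint you would call
# urllib.request.urlopen(request) and parse the JSON response body.
```

With the official OpenAI Python SDK, the equivalent step is usually just passing your endpoint as `base_url` when constructing the client, which is why no protocol rewrite should be needed.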

Step 4

Operate with telemetry and zero retention

Ship logs, traces, and metrics to your preferred sinks while keeping prompt and tool-call content on your own hardware.
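
One way to picture the split between telemetry and content is the Python sketch below: operational metadata is kept, content-bearing fields are dropped before anything is recorded, and a counter is rendered in the Prometheus text format. The field names and the `inference_requests_total` metric are invented for this example and are not a documented Infersec schema.

```python
from collections import Counter

# Field names below are illustrative, not a documented schema.
CONTENT_FIELDS = {"messages", "prompt", "tool_calls", "completion"}

request_counts: Counter = Counter()


def to_telemetry(event: dict) -> dict:
    """Keep operational metadata; drop anything carrying prompt or tool-call content."""
    return {k: v for k, v in event.items() if k not in CONTENT_FIELDS}


def record(event: dict) -> dict:
    """Count the request and return the content-free record that would be shipped."""
    safe = to_telemetry(event)
    request_counts[(safe["endpoint"], safe["status"])] += 1
    return safe


def render_metrics() -> str:
    """Render the counters in the Prometheus text exposition format."""
    lines = ["# TYPE inference_requests_total counter"]
    for (endpoint, status), count in sorted(request_counts.items()):
        lines.append(
            f'inference_requests_total{{endpoint="{endpoint}",status="{status}"}} {count}'
        )
    return "\n".join(lines) + "\n"


logged = record({
    "endpoint": "chat",
    "status": 200,
    "latency_ms": 412,
    "messages": [{"role": "user", "content": "secret prompt"}],
})
print(logged)            # the "messages" field is gone
print(render_metrics(), end="")
```

The design choice illustrated here is to filter at the point of recording, so content never reaches a log sink in the first place rather than being scrubbed afterwards.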

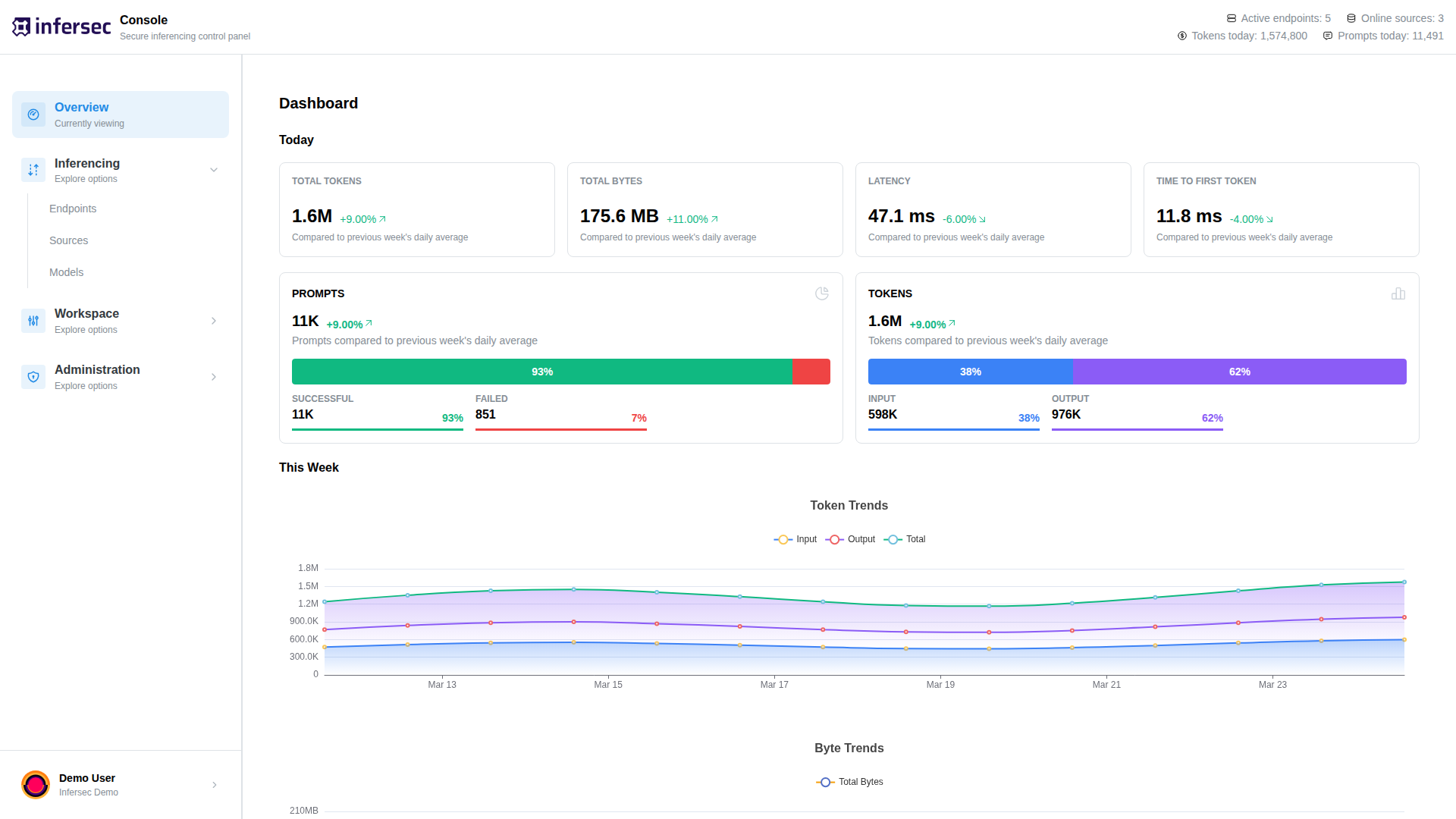

Control plane for secure, composable AI delivery

Connect private hardware, expose compatible APIs, and route intelligently: own your AI stack end to end.

OpenAI & Anthropic-compatible endpoints

Drop-in support for existing SDKs and clients without protocol rewrites.

Connect Linux and macOS hosts

Run conduit workers on your own machines and keep model execution private.

Intelligent routing with failover

Session stickiness, load balancing, priority routing, and automatic offline-source detection across your inference fleet.
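
Session stickiness combined with offline detection can be sketched with rendezvous (highest-random-weight) hashing, shown below in Python. This is one common technique for the job, not a description of how Infersec implements it, and the `sticky_source` helper and host names are hypothetical.

```python
import hashlib
from typing import AbstractSet


def sticky_source(session_id: str, sources: list[str],
                  offline: AbstractSet[str] = frozenset()) -> str:
    """Pick a stable source for a session using rendezvous hashing."""
    online = [s for s in sources if s not in offline]
    if not online:
        raise RuntimeError("no online sources")

    def score(source: str) -> int:
        # Each (session, source) pair gets a deterministic pseudo-random score.
        digest = hashlib.sha256(f"{session_id}:{source}".encode("utf-8")).digest()
        return int.from_bytes(digest[:8], "big")

    # The highest score wins, so the same session keeps the same source.
    return max(online, key=score)


sources = ["linux-gpu-1", "linux-gpu-2", "mac-studio"]
home = sticky_source("session-abc", sources)
fallback = sticky_source("session-abc", sources, offline={home})
print(home, "->", fallback)
```

A useful property of this approach is that when one source goes offline, only the sessions that were pinned to it move; every other session stays where it was.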

Self-hosted MCP gateways

Expose MySQL, Postgres, and MariaDB through scoped MCP gateways — attach tool servers per endpoint with policy-aware access controls.

Privacy-first by design

No prompt logging, no tool-call content storage. Your data stays on your infrastructure in self-hosted deployments.

Pluggable telemetry

Ship logs, traces, and Prometheus-format metrics to your existing observability stack.

Server-side tool calling

Tool calls are intercepted and executed on the server via MCP tool servers — results are fed back into the conversation automatically, transparent to the client.
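
The loop described above can be sketched in a few lines of Python. Everything here is a stand-in: `fake_model` replaces a real model runtime, the `TOOLS` registry replaces real MCP tool servers, and the message shapes loosely follow the OpenAI Chat Completions convention rather than any documented Infersec internals.

```python
import json
from typing import Callable

# Hypothetical tool registry standing in for MCP tool servers.
TOOLS: dict[str, Callable[..., str]] = {
    "get_row_count": lambda table: json.dumps({"table": table, "rows": 42}),
}


def fake_model(messages: list[dict]) -> dict:
    """Stand-in for a model: asks for a tool once, then answers from its result."""
    tool_results = [m for m in messages if m["role"] == "tool"]
    if not tool_results:
        return {
            "role": "assistant",
            "content": None,
            "tool_calls": [{
                "id": "call_1",
                "function": {
                    "name": "get_row_count",
                    "arguments": json.dumps({"table": "orders"}),
                },
            }],
        }
    rows = json.loads(tool_results[-1]["content"])["rows"]
    return {"role": "assistant", "content": f"The orders table has {rows} rows."}


def run_with_tools(messages: list[dict], max_rounds: int = 5) -> dict:
    """Resolve tool calls server-side and return only the final assistant message."""
    history = list(messages)
    for _ in range(max_rounds):
        reply = fake_model(history)
        history.append(reply)
        calls = reply.get("tool_calls")
        if not calls:
            return reply  # nothing left to execute; this is what the client sees
        for call in calls:
            fn = call["function"]
            result = TOOLS[fn["name"]](**json.loads(fn["arguments"]))
            history.append({"role": "tool", "tool_call_id": call["id"], "content": result})
    raise RuntimeError("tool loop did not converge")


final = run_with_tools([{"role": "user", "content": "How many orders are there?"}])
print(final["content"])  # prints "The orders table has 42 rows."
```

The client only ever receives the final assistant message; the intermediate tool call and its result stay on the server side of the loop.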

European provider

Infrastructure and data handling operate under EU regulations. No data leaves European jurisdiction.

Affordable usage-based pricing

Pay only for what you use — per token and per connected source hour. No fixed fees, no minimums.

Route AI requests intelligently across your hardware

Set up a public-facing, secure API that uses your own hardware as LLM compute. Choose from tens of thousands of models to download and run, then use the API to power your coding agents, agent pipelines, or chatbots, all through OpenAI- and Anthropic-compatible API formats.

Read docs

Privacy-focused, Europe-first

Prompts and tool-call payloads are never logged or stored, and no data is ever used for training purposes. You maintain control of your stack, end to end.

Composable Tool Services

Build composable tool services with custom methods that can be attached to any endpoint for server-side tool execution, or exposed publicly and securely for external MCP integration. Mix and match tools to create powerful, reusable service stacks tailored to your workflows.

Compatibility matrix

Integrate with your current stack, no protocol rewrite

Infersec is built for teams that need cloud-facing AI endpoints while keeping model execution and policy ownership on their own infrastructure.

| Surface | Support |
|---|---|
| API compatibility | OpenAI Chat Completions + Anthropic Messages |
| Hardware agent OS | Linux and macOS worker hosts |
| Inference sources | Local runtimes and remote providers through one route policy |
| Telemetry | Prometheus-format metrics, pluggable sinks for logs and traces |
| Privacy | Zero prompt and tool-call logging by default |
| Model sources | Download and serve Hugging Face models directly |